Localised Crepuscular Rays

Crepuscular rays, also called volumetric rays or god rays, have become a common effect in games. They are used especially in first- and third-person games – where the player can easily look at the sky – to give the sun, moon, or other bright light sources additional impact and create atmosphere.

Depending on how the effect is used it can emphasize the hot humidity of a rain forest, the creepiness of a foggy swamp, or the desolation of a post-apocalyptic scene.

While there are multiple ways to achieve this and similar effects, the method we will look at in particular is the approximation of volumetric light scattering as a post-process using screen-space ray marching.

In this article we will quickly step through the idea behind this algorithm, and how it is commonly used. We will then show how we can easily expand from there to create a solution that works well with multiple light sources, including some source code and images.

A link to a full example project can be found at the end of the post.

As a little spoiler, here is a quick video of the example project:

Volumetric light scattering as a post-process

This approach is the one most commonly used today. While physically inaccurate, it gives very convincing and often stunning results, and it can be applied to any scene without much modification of the rendering pipeline.

The idea behind the algorithm is as follows:

- For each pixel on the screen, we shoot an imaginary ray towards the location of the light source.

- We sample the rendered scene a number of times along that ray.

- We combine the samples in a weighted way.

- The resulting colour value is added to the original pixel.

This simple algorithm results in bright streaks pointing away from the light. Where there is darker scene geometry, the streaks are more subdued – the respective pixels add less to the combined end value – giving the appearance of volumetric shadows.

In code, the algorithm would be implemented in the pixel/fragment shader roughly as follows.

    // set up some variables
    vec2 uv = pixelTextureSpace;
    vec2 uvStep = (lightSourceUV - uv) / float(SAMPLES);
    vec4 argb = vec4(0.0);
    float decay = 1.0;

    for (int i = 0; i < SAMPLES; i++)
    {
        // sample scene render target
        vec4 texel = texture(renderTarget, uv);

        // accumulate value, weighted by the current decay
        argb += texel * decay;

        // march ray towards light
        uv += uvStep;

        // slightly decay accumulation for distance attenuation
        decay *= DECAYSTEP;
    }

    // return weighted accumulated colour
    // (float division: with an integer SAMPLES, 1 / SAMPLES would evaluate to 0)
    return argb * (1.0 / float(SAMPLES));

A more complete explanation of this algorithm, including the physics behind volumetric light scattering, as well as images and code of the effect, can be found in NVIDIA's GPU Gems 3.
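To check the arithmetic of the loop off the GPU, here is a minimal CPU-side sketch in Python. It is not shader code and not taken from the example project: the greyscale list-of-rows image, the nearest-neighbour lookup standing in for `texture()`, and the function names are all assumptions made for illustration.

```python
def march_towards_light(image, pixel_uv, light_uv, samples=32, decay_step=0.95):
    """CPU reference of the shader loop for one pixel of a greyscale image.

    image is a list of rows of floats; uv coordinates run from 0 to 1.
    A clamped nearest-neighbour lookup stands in for texture().
    """
    height, width = len(image), len(image[0])

    def sample(u, v):
        x = min(max(int(u * width), 0), width - 1)
        y = min(max(int(v * height), 0), height - 1)
        return image[y][x]

    u, v = pixel_uv
    step_u = (light_uv[0] - u) / samples
    step_v = (light_uv[1] - v) / samples
    total, decay = 0.0, 1.0
    for _ in range(samples):
        total += sample(u, v) * decay  # accumulate, weighted by decay
        u += step_u                    # march towards the light
        v += step_v
        decay *= decay_step            # attenuate later samples
    return total / samples


def uniform_scene_brightness(samples, decay_step):
    """Closed form of the result on an all-white scene (a geometric series)."""
    if decay_step == 1.0:
        return 1.0
    return (1.0 - decay_step ** samples) / (samples * (1.0 - decay_step))
```

One thing this makes visible: because the result is divided by the sample count rather than by the sum of the weights, an unoccluded ray does not come out at full brightness. With 32 samples and a decay step of 0.95, `uniform_scene_brightness(32, 0.95)` is roughly 0.5, so the decay step also acts as an overall intensity control.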

Multiple light sources

As the GPU Gems 3 chapter mentions, the effect can easily be applied to multiple light sources by simply repeating it for each light and adding up the results.

However, this is rarely done in games.

The reason is that a lot of samples have to be taken along each ray to make the effect convincing and free of artefacts. Doing so for every pixel on the screen is expensive, so it is usually infeasible to cast volumetric rays for more than one light at the same time.

There is, however, a relatively easy solution to this problem.

If we let ourselves be inspired by deferred shading, specifically its ability to render a large number of lights as long as each light occupies relatively little screen space, we can draw the following analogy.

If we confine our volumetric rays to small areas of the screen, we can render many of them at the same time without too much of a performance impact. In principle, we can even render many thousands of volumetric lights at once, provided the rendered areas are small enough. In practice, however, a very small effect is indistinguishable from a simple sprite.

Nonetheless, there exists a middle ground where the effect is large enough to be clearly observable, while being small enough to be performant.
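To put rough numbers on this trade-off, the sketch below counts texture fetches for both variants. It is a back-of-the-envelope model under stated assumptions, not a measurement: quads are square, lie fully on screen, and use the same sample count as the full-screen pass. All function names are made up for this illustration.

```python
def fullscreen_fetches(width, height, samples, lights):
    """Texture fetches when every light is marched for every screen pixel."""
    return width * height * samples * lights


def localised_fetches(quad_size, samples, lights):
    """Texture fetches when each light only covers a square quad of pixels."""
    return quad_size * quad_size * samples * lights


def lights_for_same_budget(width, height, quad_size):
    """How many square quads cost as much as one full-screen pass."""
    return (width * height) // (quad_size * quad_size)


if __name__ == "__main__":
    # one full-screen light at 1920x1080 with 64 samples per pixel
    print(fullscreen_fetches(1920, 1080, 64, 1))
    # number of 128x128 quads that fit into the same fetch budget
    print(lights_for_same_budget(1920, 1080, 128))
```

Under these assumptions, a single full-screen light at 1920×1080 costs about as many fetches as 126 lights confined to 128×128 pixel quads.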

The implementation is fairly straightforward if we start from the fullscreen effect:

- Instead of rendering to the full screen, we create one quad per light source that is confined to the area of the screen where we want to see the effect.

- We need to make sure that the rays fade out towards the edge of the quad, which can be easily done using a linear or quadratic falloff function.
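The falloff from the second step could look like the sketch below. It assumes the light sits at the centre of its quad and that the quad-local coordinates run from -1 to 1. The exact shape of the "quadratic" variant is my assumption too (here the square of the linear factor). In a shader, the accumulated colour would be multiplied by this factor.

```python
import math


def edge_falloff(local_x, local_y, quadratic=False):
    """Fade factor for a point inside a light quad.

    Local coordinates run from -1 to 1 with the light at (0, 0).
    Returns 1 at the centre and 0 at (and beyond) a radius of 1,
    so the rays vanish before they reach the quad's edge.
    """
    distance = math.hypot(local_x, local_y)
    linear = max(0.0, 1.0 - distance)
    return linear * linear if quadratic else linear
```

The quadratic variant fades faster near the centre and approaches zero more smoothly, which can help hide the boundary of the quad.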

If we render all light sources using a single vertex buffer and draw call, this will make maximum use of GPU resources, and create the effect as shown in the video above.
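As a sketch of what batching into a single buffer might involve on the CPU side, the function below packs one quad per light into flat vertex and index lists that could be uploaded once and drawn with one call. The vertex layout (screen position, quad-local position, light centre) is an assumption for illustration and not necessarily the layout used in the example project.

```python
def build_light_quads(lights):
    """Pack one quad per light into flat vertex and index lists.

    lights is a list of (centre_x, centre_y, half_size) tuples in screen units.
    Each vertex is (x, y, local_x, local_y, centre_x, centre_y); this layout
    is hypothetical and chosen only to keep the sketch readable.
    """
    corners = [(-1.0, -1.0), (1.0, -1.0), (1.0, 1.0), (-1.0, 1.0)]
    vertices, indices = [], []
    for cx, cy, half in lights:
        base = len(vertices)
        for lx, ly in corners:
            vertices.append((cx + lx * half, cy + ly * half, lx, ly, cx, cy))
        # two triangles per quad, sharing the diagonal base -> base + 2
        indices.extend([base, base + 1, base + 2, base, base + 2, base + 3])
    return vertices, indices
```

Passing the light centre along with every vertex lets the fragment stage know which point to march towards without any per-light state changes.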

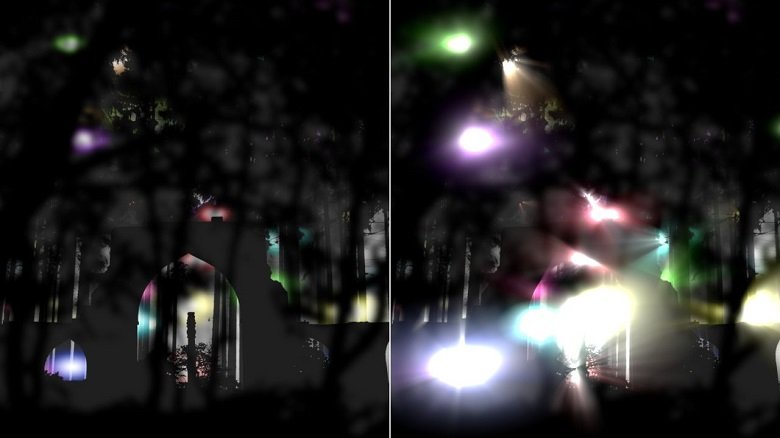

Take a look at this screenshot for a comparison with the effect off and on:

The left part of the image is considerably darker, since no effect is applied at all. We could brighten the image by rendering brighter and larger sprites for our lights, or by applying a bloom effect. However, neither of them gives results as satisfying as the volumetric ray approximation on the right.

Note the rays shining through the canopy in the top part of the image, or between the buildings and underbrush in the lower centre. This ray-like effect, which comes to life even more in motion, cannot easily be achieved using other methods.

Application in games

The example above is of course somewhat contrived and serves merely to demonstrate the effect.

For an example of where I used the effect just as described above, consider Roche Fusion. Since it is a game full of explosions, making them look spectacular was very important to us when designing the aesthetic of the game.

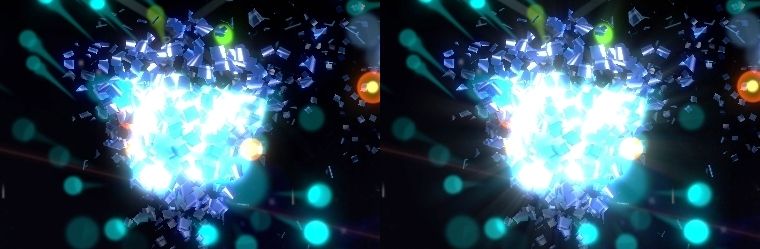

We added localised crepuscular rays to give the game’s explosions more impact and detail, without requiring any significant work on the CPU. For a comparison, see this excerpt of a screenshot, without volumetric rays on the left and with them on the right:

The rays are very subtle, but enhance the sense of force and motion of the overall effect.

For a clearer example, see the exploding blue enemies in this screenshot:

Note how the rays appear to extend from between the particles of the exploding enemies.

Conclusion

I hope this small insight into a variation on the commonly used god-ray effect has been interesting.

The full code for the example project can be found on my GitHub page.

Let me know if you can think of any other possible applications of the effect, or if you have ideas for other post processing effects that can be modified in similar ways to what we did here today.

And of course, feel free to drop any questions you may have in the comments below.

Enjoy the pixels!

Reference: Localised Crepuscular Rays from our NCG partner Paul Scharf at the GameDev<T> blog.